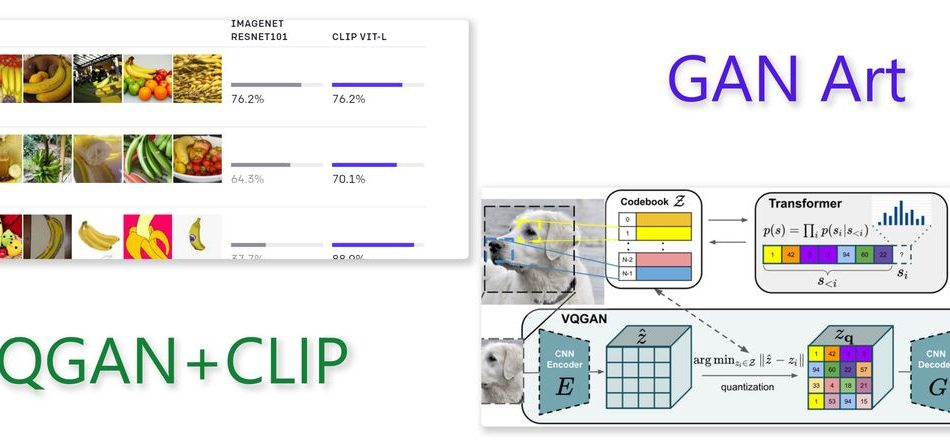

When VQGAN+CLIP took social media by storm last year, I checked it out and was fascinated by how far machine learning techniques have progressed in the last ten years. VQGAN+CLIP is a text-to-image generation model released by Katherine Crowson as a Google Colab notebook. An article I was reading recently reminded me of it, so I spent some time trying out various captions, and I am sharing the images I got.

VQGAN+CLIP is a text-to-image model that generates images from a set of text prompts. The field is called synthetic imagery (“GAN Art”). Here, VQGAN is the image generator, and CLIP is the “natural language steering wheel” that guides the generator toward images that best match the given prompt (caption). You can read more on this topic in the Berkeley blog, in “VQGAN+CLIP, how does it work?”, and in “How I Made this Article’s Cover Photo”.
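To make the “steering wheel” idea a bit more concrete, here is a toy sketch of the feedback loop in plain Python. It is not the code from the Colab notebook: `generate`, `score`, and `steer` are hypothetical stand-ins I made up for illustration, the “image” is just a short list of numbers, and the “prompt” is a list of target pixel values. The real thing uses large neural networks and automatic differentiation, but the shape of the loop is roughly the same: generate, score against the prompt, nudge, repeat.

```python
import math
import random


def generate(latent):
    """Hypothetical stand-in for VQGAN: map latent values to pixels in (0, 1)."""
    return [1.0 / (1.0 + math.exp(-z)) for z in latent]


def score(image, target):
    """Hypothetical stand-in for CLIP: higher means a closer match to the prompt.

    Here the 'prompt' is simply a list of target pixel values (an assumption
    made for this toy), and the score is the negative squared error.
    """
    return -sum((p - t) ** 2 for p, t in zip(image, target))


def steer(target, steps=200, lr=0.5, seed=0):
    """Nudge the latent, step by step, so the generated image scores higher."""
    rng = random.Random(seed)
    latent = [rng.uniform(-1.0, 1.0) for _ in target]
    history = []
    for _ in range(steps):
        image = generate(latent)
        history.append(score(image, target))
        # Hand-derived gradient of the score with respect to each latent value:
        # d/dz -(sigmoid(z) - t)^2 = -2 * (p - t) * p * (1 - p)
        grad = [-2.0 * (p - t) * p * (1.0 - p) for p, t in zip(image, target)]
        # Gradient ascent: move the latent in the direction that raises the score.
        latent = [z + lr * g for z, g in zip(latent, grad)]
    return generate(latent), history


if __name__ == "__main__":
    target = [0.8, 0.2, 0.5, 0.6]
    image, history = steer(target)
    print("first score:", history[0])
    print("last score: ", history[-1])
    print("final image:", [round(p, 2) for p in image])
```

The score recorded at each step plays the role of the intermediate images in the collages: early iterations are far from the prompt, later ones are closer.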

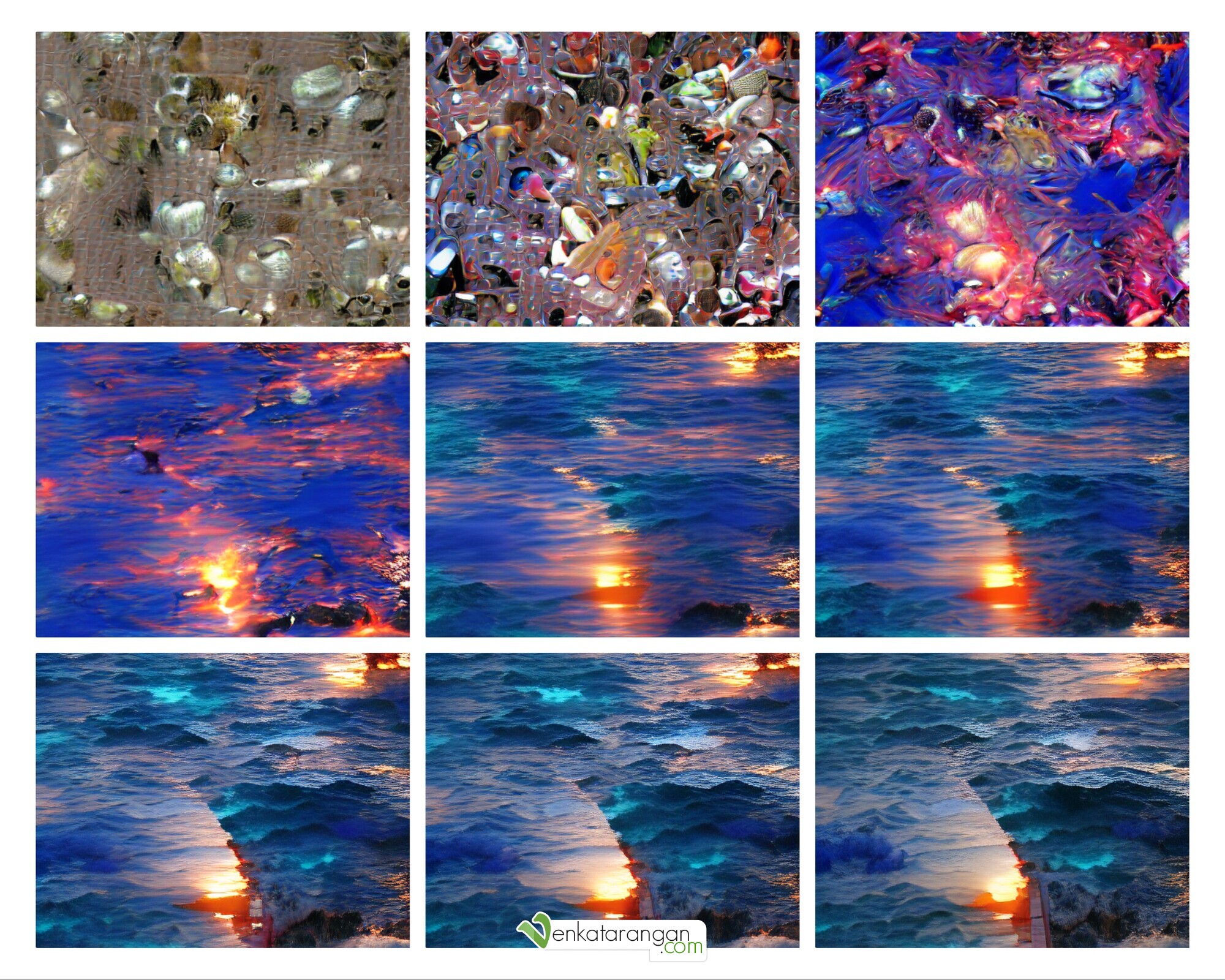

Below are the outputs I got for the captions “the sunset and the blue waters” and “South India village”. In the collages, the individual images from successive iterations progress from left to right and top to bottom.

Generated images for the caption “South India village” with VQGAN and OpenAI CLIP, machine learning frameworks.

Generated images for the caption “the sunset and the blue waters” with VQGAN and OpenAI CLIP, machine learning frameworks.

Adding the words “Unreal Engine” to the second caption is a hack that makes the generated images more interesting.

Generated images for the caption “South India village, Unreal Engine” with VQGAN and OpenAI CLIP, machine learning frameworks.

I used the Google Colab notebook by Katherine Crowson, artist and mathematician. You too can try this easily for free, with no setup needed at your end – just change the prompt and run the Colab notebook. (Instructions to use it are here in this blog). Give it a spin. Search for #VQGAN #GANART on Twitter for inspiration, and you will find a seemingly endless stream of computer-generated images being shared.
